Managing a phpBB forum can be tricky when it comes to search engines. If the forum is not configured correctly, bots may index duplicate content, session IDs, or system files instead of your actual posts. To help the community and other site administrators, I am sharing my "perfected" robots.txt configuration, tailored for beautiful-romania.com, which is also a forum running phpBB 3.3.x.

This configuration keeps your sitemaps and feeds crawlable while blocking "junk" URLs that clutter search results.

Code:

User-agent: *
# 1. Allow Access (IMPORTANT: placed above blocking rules)
Allow: /app.php/sitemap*
Allow: /ucp.php?mode=privacy
Allow: /ucp.php?mode=terms
# 2. Allow RSS feeds so Google can see new posts quickly
Allow: /app.php/feed*
# 3. Block system pages and dynamic routes
Disallow: /adm/
Disallow: /cache/
Disallow: /includes/
Disallow: /language/
Disallow: /store/
Disallow: /common.php
Disallow: /config.php
Disallow: /cron.php
Disallow: /mcp.php
Disallow: /ucp.php
Disallow: /viewonline.php
Disallow: /search.php
Disallow: /app.php/
Disallow: /posting.php
Disallow: /*&uid=*
Disallow: /*?uid=*
Disallow: /*&bookmark=*
Disallow: /*?bookmark=*
Disallow: /*view=print*
# 4. Block links containing session IDs (sid)
Disallow: /*?sid=*
Disallow: /*&sid=*
# 5. Block highlighting links (hilit)
Disallow: /*?hilit=*
Disallow: /*&hilit=*
# 6. Block forum sorting (prevents duplicate content)
Disallow: /*&sk=
Disallow: /*&sd=
Disallow: /*&st=
Disallow: /*&sr=*
# Allow the rest of the content (posts and images)
Allow: /

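# Sitemap: If you have one, add its link below and remove the leading '#', for example:
# Sitemap: https://your-website.com/sitemap.xml

- Sitemap Priority: The Allow rules for the sitemap and feeds are more specific than Disallow: /app.php/, so Google and other crawlers that pick the longest matching rule will still fetch them. Placing the Allow rules at the top also keeps them working for older crawlers that stop at the first matching rule.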

- Duplicate Content Prevention: It blocks sorting parameters (sk, sd, st) which often create multiple URLs for the same topic.

- Clean Indexing: It prevents the indexing of personal session IDs (sid), which is a common issue with phpBB forums.

- Privacy & Terms: It keeps your mandatory legal pages crawlable, which may help search engines treat the site as trustworthy.
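If you want to check how these rules interact before uploading the file, the rough Python sketch below evaluates a path the way longest-match crawlers are documented to do: the longest matching pattern wins, and Allow wins a tie. It is only an approximation for testing, not Google's actual parser. The names `RULES`, `pattern_to_regex` and `is_allowed` are made up for this example, and `RULES` holds only a subset of the file above.

```python
import re

# A subset of the rules from the robots.txt above (for illustration only).
RULES = [
    ("allow", "/app.php/sitemap*"),
    ("allow", "/ucp.php?mode=privacy"),
    ("allow", "/ucp.php?mode=terms"),
    ("allow", "/app.php/feed*"),
    ("disallow", "/ucp.php"),
    ("disallow", "/app.php/"),
    ("disallow", "/*?sid=*"),
    ("disallow", "/*&sid=*"),
    ("disallow", "/*&sk="),
    ("allow", "/"),
]


def pattern_to_regex(pattern):
    """Translate a robots.txt path pattern into a regex.

    '*' matches any run of characters, a trailing '$' anchors the end,
    and everything else is treated literally.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(path, rules=RULES):
    """Return True if the path (including query string) may be crawled.

    The longest matching pattern wins; on equal length, Allow wins.
    A path that matches no rule at all is allowed.
    """
    best_len, best_allow = -1, True
    for kind, pattern in rules:
        # re.match anchors at the start of the path, like robots.txt rules.
        if pattern_to_regex(pattern).match(path):
            allow = kind == "allow"
            if len(pattern) > best_len or (len(pattern) == best_len and allow):
                best_len, best_allow = len(pattern), allow
    return best_allow


if __name__ == "__main__":
    for path in (
        "/app.php/sitemap",
        "/ucp.php?mode=privacy",
        "/ucp.php?mode=login",
        "/viewtopic.php?t=12&sid=abc123",
        "/viewtopic.php?t=12",
    ):
        print(path, "->", "allowed" if is_allowed(path) else "blocked")
```

Pass the path together with its query string, because most of the blocking rules here target parameters and not directories.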

Quick Tip: After uploading the file, don't forget to resubmit your robots.txt in Google Search Console (under the "Settings > Robots.txt report" section) and other search engine tools. This forces bots to see your clean configuration right away!

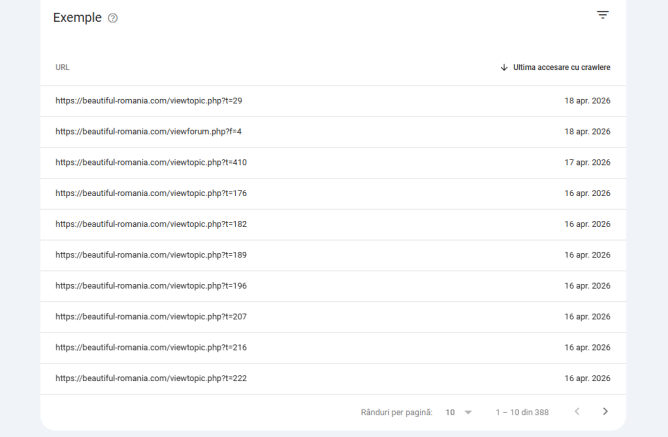

I let you a screenshot of how clean indexed links looks like in my Google Search Console after this robots.txt implementation:

Why is this essential for your forum? (The "Crawl Budget" Factor)

- Many administrators think that if Google has already indexed "wrong" links (like session IDs or print views), it's too late. This is not true.

- Optimization of Crawl Budget: Search engines only spend a limited amount of time on your site. If the bots are busy crawling 1,000 "junk" links created by session IDs or sorting, they might run out of "budget" before reaching your latest high-quality post. This configuration forces the bots to focus only on useful content.

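- Cleaning the Index: Even if your search results are currently cluttered with bad links or redirects to the login page (especially from indexed ucp.php pages), implementing this robots.txt now is still worthwhile. Once the bots see the Disallow rules, they will gradually stop crawling those URLs, and most of them will drop out of the results over time. Keep in mind that a blocked URL that is still linked from elsewhere can remain listed without a description, so for stubborn entries use the removal tool in Google Search Console.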
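To get a feeling for how much of your crawl is wasted on such links, you can run a list of crawled URLs (for example, taken from your server access log) through a small script like the one below. It is only a rough sketch: `JUNK_PARAMS`, `junk_reason` and `summarize` are names I made up, the example.com URLs are placeholders, and the check is slightly broader than the robots.txt rules because it flags a parameter wherever it appears in the query string.

```python
from collections import Counter
from urllib.parse import parse_qs, urlsplit

# Query parameters that the robots.txt above blocks.
JUNK_PARAMS = ("sid", "hilit", "sk", "sd", "st", "sr", "uid", "bookmark")


def junk_reason(url):
    """Return the first blocked parameter found in the URL, or None."""
    query = parse_qs(urlsplit(url).query, keep_blank_values=True)
    if query.get("view") == ["print"]:
        return "view=print"
    for name in JUNK_PARAMS:
        if name in query:
            return name
    return None


def summarize(urls):
    """Count URLs per junk parameter; clean URLs are counted as 'clean'."""
    return Counter(junk_reason(url) or "clean" for url in urls)


if __name__ == "__main__":
    # Placeholder URLs; replace with the URLs from your own log or export.
    sample = [
        "https://example.com/viewtopic.php?t=10",
        "https://example.com/viewtopic.php?t=10&sid=abc",
        "https://example.com/viewtopic.php?t=10&view=print",
        "https://example.com/viewforum.php?f=2&sk=t&sd=a",
        "https://example.com/viewtopic.php?t=10&hilit=castle",
    ]
    for reason, count in summarize(sample).most_common():
        print(reason, count)
```

If the "clean" count is small compared to the rest, the bots are spending most of their visits on URLs that this robots.txt is meant to block.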

If you still need help or more information, just reply here and I will help you.